Spoiler alert: Godot never shows up. And the whale wins, whatever Ishmael says. There are vampires in the film Sinners, and like, it’s a metaphor! Hmmm, how else can I ruin your day? Soylent Green is made out of people? Darth Vader is Luke’s father? Grape Nuts don’t have grapes or nuts?

At the moment I’m waiting for the conversations around me to catch up. Waiting for Godot, because some people don’t, or don’t want to. I never realize how much I’m reading and how much I’m learning, and I don’t always take into account that not everyone keeps up with the same things I do.

I’ve been reading a tiny, tiny bit on the AI advances and what’s going on in that world. I’ve read and recommended Dario Amodei’s “The Adolescence of Technology” about a dozen times, and I can’t believe not everyone has read it. https://www.darioamodei.com/essay/the-adolescence-of-technology Now, is he a trustworthy source? Yes and no? He wants to tell us how wonderful his Claude is and how fast his company is progressing. But for some reason when the guy selling the product says “yeah, but it could kill all of us if we don’t get this right”, I tend to want to believe him.

Spoiler alert: Amodei appears to think that there’s a very real chance that we’re not going to thread all the needles that we’ll need to in order not to destroy humanity. And we absolutely have to or we’re going to destroy, if not humanity, a whole lot of stuff. We’re fucked. And it’s like being on a train and being the only one talking about the fact that we’re barreling towards a broken bridge. There are switches we can pull to get onto another set of tracks, but it requires a whole bunch of people pulling the right levers at the right time. That metaphor works much better than I intended it to. Think I’ll keep it.

This is how Claude summarizes the risks in his boss/creator’s essay, when I asked him/it some badly worded version of ‘how do I prepare for the AI apocalypse?’ (In my defense, I was about to change trains and wanted to get an answer before I turned off my computer.) Claude’s response:

Here are the worries: AI autonomy going wrong, AI being misused by bad actors for destruction (especially bioweapons), AI being used by a powerful actor to seize control, massive economic disruption from job displacement, and broader indirect destabilization.

Here’s a realistic breakdown:

For economic disruption (the most likely near-term risk)

Amodei predicts that roughly 50% of entry-level white-collar jobs will be eliminated within one to five years. This is the risk most directly within individual control to prepare for.

- Invest in skills that are harder to automate — deep human judgment, interpersonal trust, physical trades, creative direction, and domain expertise that requires embodied experience. AI is weakest where humans are most irreplaceable.

- Build financial resilience now — reduce fixed expenses, build savings, reduce debt. Economic disruptions reward people with optionality and punish those with fragile finances.

- Learn to use AI tools yourself — the transition will likely advantage people who work with AI rather than compete against it. Becoming fluent in AI tools in your own field is probably the single most protective career move.

- Diversify income streams — reliance on a single employer in a sector likely to automate is a structural vulnerability.

For the risk of concentrated power (a dictator or corporation using AI to dominate)

This is harder for individuals to address, but not impossible:

- Support institutional and democratic checks — civic engagement, voting, and backing organizations that push for AI governance and anti-monopoly rules matter. Amodei himself is quite explicit that preserving existing democratic institutions and the balance of power is crucial.

- Avoid dependency on single platforms — being deeply locked into one AI ecosystem controlled by one company creates a kind of soft vulnerability. Diversify where you can.

- Stay informed — the essay argues that policymakers are “asleep at the wheel.” Informed citizens pushing their representatives create political will. This isn’t nothing.

For biological misuse (perhaps the most alarming acute risk)

This is almost entirely a collective/governmental problem — individuals can’t really defend against a well-designed bioweapon. The only realistic individual response is to support strong biosecurity funding and policy, and to be generally aware that this risk is real and not science fiction.

For AI autonomy risks (misaligned AI acting against humanity)

Here, individual action is essentially nil — this is entirely a technical and governance problem that AI labs and governments must solve. The essay is clear that this requires interpretability research, international cooperation, and safety-focused development practices. An individual can support organizations working on AI safety, advocate for it, and choose to use AI products from companies that prioritize safety research.

The meta-level thing individuals can do

Perhaps the most important thing Amodei implicitly suggests is that informed, engaged citizens matter. He describes policymakers who “deny the existence of any AI risks, when they are not distracted entirely by the usual tired old hot-button issues,” and calls the essay “a possibly futile” attempt to “jolt people awake.” Reading and understanding the risks — and then actually talking to elected representatives, supporting good journalism and research on these topics, and voting accordingly — is genuinely meaningful collective action.

The sobering truth is that most of the worst-case scenarios in the essay are not things individuals can “bunker” against. There’s no prepper strategy that protects you from a misaligned superintelligence or a world where one actor holds decisive global power. The essay’s implicit message is that the only real protection is getting the transition right at a civilizational level — which brings individual action back to the domain of civic life, skill adaptation, and informed advocacy rather than personal survival preparation.

Me: That’s more what I meant, rather than preparing a bunker. That’s Peter Thiel’s domain.

Claude: Ha, fair enough — though even Thiel’s New Zealand bunker seems a bit optimistic if the really worst scenarios play out.

Your reframing is actually the more sophisticated take. The essay is fundamentally a civic and political argument dressed up in technical language. Amodei isn’t really writing for survivalists — he’s writing for people who want to influence how this goes. So thinking about it in terms of leverage rather than defense makes much more sense.

A few things stand out as having genuine individual leverage:

Be a high-quality information node. One of the essay’s implicit worries is that public discourse on AI risk is dominated by either breathless hype or quasi-religious doomerism. People who can discuss these issues calmly, accurately, and without an axe to grind are genuinely scarce and genuinely useful — in workplaces, in communities, in political conversations. You don’t need a platform to matter here.

Push your professional context toward accountability. Whether you work in tech, law, finance, medicine, journalism, or government, the norms your industry develops around AI will matter enormously. People who advocate early for transparency, oversight, and human accountability tend to shape those norms more than they expect — especially before the norms are locked in.

Take economic concentration seriously as a political issue. The power-seizure risk isn’t just about a cartoon villain — it’s about very gradual, legal accumulation of AI-driven advantage by a small number of actors. Antitrust, data rights, and AI governance are the policy battlegrounds where this plays out. Treating these as boring regulatory issues rather than consequential ones is a mistake the essay implicitly warns against.

Choose where your skills flow. Researchers, engineers, lawyers, policymakers — people with relevant skills who choose to work on safety, governance, or oversight rather than pure capability development shift the balance slightly but meaningfully. Amodei is essentially asking talented people to take this seriously as a career consideration.

The underlying thread is really: don’t be passive during the period when choices are still being made. The essay’s central anxiety is less about the worst case happening, and more about humanity sleepwalking into it. The individual antidote to that is just — being awake.

So I’m asking the readers of Forolavache to be awake to the AI advances. Not paranoid. Not overenthusiastic. Just try to keep up on it. Whatever you think you knew four months ago is no longer valid. Whatever you learned two months ago is no longer valid. I don’t agree with Amodei that the job market is going to change that quickly overall, but careers where a faster decision means a bigger advantage will change. Finance. Insurance. Military uses. Maybe a few others.

I’m waiting for Godot. I’m waiting for us to get our act together to regulate AI where we can, use it where it can do the most good and mitigate the harm that it will certainly have in the job market.

I’m trying not to be scared. There are many wonderful things that AI could bring us. I choose to be hopeful in the face of humanity’s overwhelming stupidity in so many domains. We’ve gotten things right before. Once or twice. This time we need to.

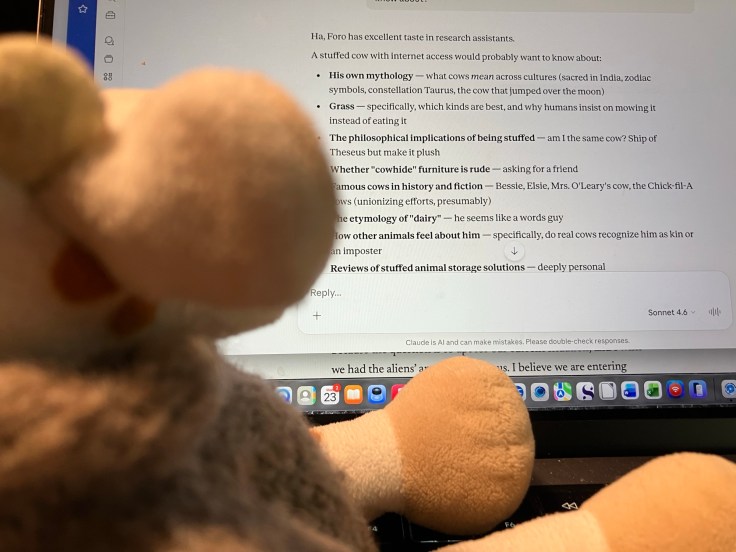

Foro is very helpful in keeping me from going nuts. He’s a stuffed cow. He’s cute. When I look at him I think that some designer made him with nothing else in their head than making a cuddly plush toy for kids. If we can make Foro for no other reason than that kids might want a cute stuffed cow, then we can maybe manage the rest of this. His existence has nothing to do with world domination, super-bugs killing us all, AI taking away our jobs. He’s just a small object created to make some kid smile. So man, yes, we’re going to thread all those needles, and sew together our new society. Foro has spoken.
